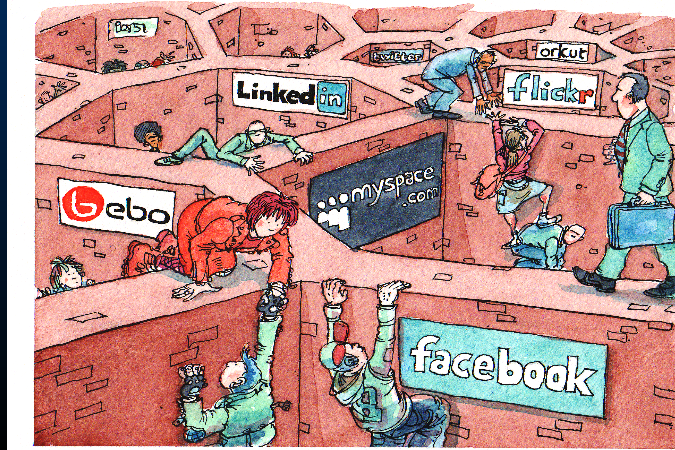

The internet’s public square is privatised, algorithmically controlled for “engagement” over any idea of truth, and placed under the control of a handful of American corporations with no accountability to European citizens or values. The #Fediverse is the most credible existing alternative – but it lacks the shared infrastructure to function as a native commons for news and media. #OMN builds that infrastructure: trust-based, community-controlled, transparent, reversible, and owned by nobody. At €45,000 for a proof of concept, it is one of the cheapest possible investments in the long-term health of European digital public life. If it works – and the technical and social groundwork suggests it will – it becomes the plumbing for a Fediverse that can actually serve democratic societies, rather than yet more #techshit sitting alongside the #dotcons platforms that undermine them.

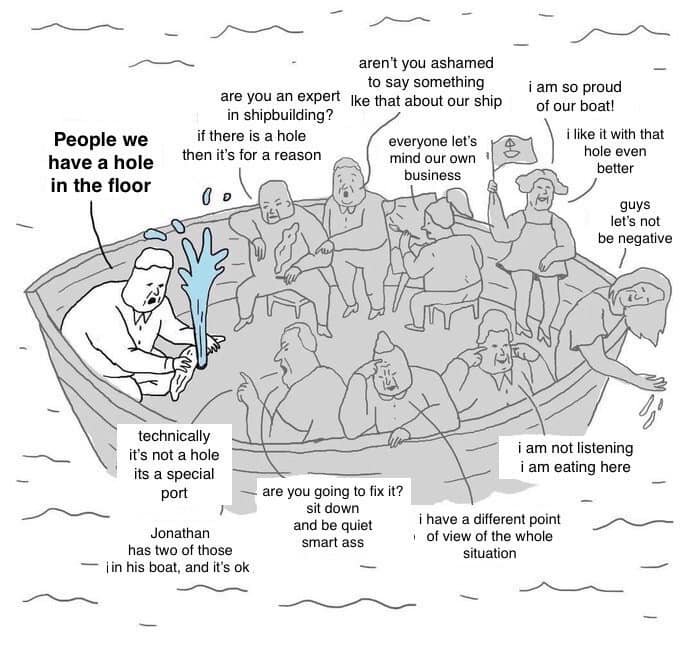

Why this matters: the #WWW was stolen. It was designed as a commons at CERN: decentralised, open, nobody in charge. What we have today instead is five American corporations controlling the information diet of billions of people. Facebook decides what news you see. YouTube’s algorithm decides which voices get amplified. Twitter/X decides who gets banned. None of these decisions are transparent, accountable, or reversible. They are made by private entities in pursuit of control, advertising revenue and engagement metrics – not truth, not public interest, not democracy.

The #Fediverse exists as a rejection of this. It’s the largest functioning alternative to corporate social media, with millions of people on thousands of servers, federated together, nobody owning the whole thing. It works. It’s growing. But it has a weakness: it is fragmented at the commons layer. There’s no shared infrastructure for how news and media actually flow across the network in a trustworthy and coherent way.

That’s the gap #OMN fills, but why? Most people don’t think about internet infrastructure. They think about whether they can trust what they read. Whether the news they see is real. Whether the platform they’re on is working for them or selling them. Whether they can do anything when something goes wrong.

Right now the answer to all of those is: it depends entirely on decisions made by people you’ll never meet, for reasons you’ll never know. #OMN proposes something different. If your community trusts a source (a trust flow), you see it. If they don’t, you don’t. And that decision is yours: reversible, transparent, locally controlled.

For a journalist in a small country trying to get independent news out, this is the difference between having infrastructure that works for them and being at the mercy of a platform that can deplatform them overnight. For a community archive trying to keep historical memory alive and accessible, this is the difference between dependence on Google’s goodwill and owning your own distribution. For an ordinary person trying to figure out what’s true, this is the difference between an algorithm designed to maximise your outrage and a network shaped by people you actually trust.

Bureaucracies fund things slowly, in ways that often serve existing power structures rather than challenging them. But digital sovereignty is an existential European concern. The EU has spent years trying to regulate American platforms – GDPR, the Digital Services Act, the Digital Markets Act – and the platforms have responded with compliance theatre, token gestures, and armies of lawyers. Regulation of concentrated private power is a losing path. The only actual answer is to build the alternative infrastructure so that people have somewhere else to go. That’s what the NGI Commons Fund is for and what #OMN does.

The EU should not only be funding products; it needs to fund commons infrastructure – the plumbing that nobody owns and everyone can use. It is like funding roads rather than funding a logistics company. The outputs are open source, meaning any European media organisation, any local community, any public institution can pick this up and use it. No lock-in. No dependency on a vendor who will be acquired or shut down.

It’s cheap, and the planned second stage would scale it across Europe with institutional partners. It also builds on European strengths. The Fediverse is disproportionately European. Mastodon was built by a German developer. The culture of digital commons, open standards, and public interest technology is stronger in Europe than anywhere else. This project is native to that tradition. It’s not asking Europe to compete with Silicon Valley on Silicon Valley’s terms – it’s asking Europe to build the alternative on its own terms.

The problem #OMN solves is getting worse, not better. Disinformation, algorithmic radicalisation, platform capture of public discourse – these are not abstract threats. They are actively destabilising European democracies. Funding the technical infrastructure for trustworthy, community-controlled information flows is not a nice-to-have. It is digital public health infrastructure.

#KISS

Thematic call: NGI Zero Commons Fund

Organisation: Open Media Network (unincorporated community project, fiscal hosting in Belgium via OpenCollective)

Country: United Kingdom

General Project Information

Proposal name: Trust-Based Media Flows for the Fediverse (#OMN)

Website / wiki: https://unite.openworlds.info/Open-Media-Network/Open-Media-Network

Abstract

Can you explain the whole project and its expected outcome(s)?

The Open Media Network (#OMN) is a protocol-driven, federated media infrastructure built on top of ActivityPub and the Emissary codebase (emissary.dev). It addresses a real gap in the current Fediverse: while platforms like Mastodon, PeerTube, and Lemmy are federated at the instance level, there is little in the way of a coherent cross-platform layer for trust-based content flows, moderation, or news aggregation. Each instance operates largely as its own silo, moderation is hierarchical and per-server, and there is no shared commons model for media distribution across the ecosystem.

#OMN proposes a minimal, compostable interaction model – the Five Functions (#5F): Publish, Subscribe, Moderate, Rollback, and Edit Metadata – implemented as a flow layer on top of existing Fediverse infrastructure. Content moves through the network as objects flowing through pipes and holding tanks, filtered and shaped by trust relationships between nodes rather than by opaque algorithms or centralised authority.
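To make the Five Functions concrete, here is a minimal in-memory sketch of one node with a holding tank and pipes. It is an illustration only: the `Node` and `MediaObject` classes, their field names, and the choice that Moderate affects future arrivals while Rollback re-evaluates what is already held are all assumptions made for this example, not the #OMN specification, the Emissary API, or ActivityPub itself.

```python
from dataclasses import dataclass, field, replace


@dataclass
class MediaObject:
    """A content object in the flow. The field names are illustrative."""
    object_id: str
    source: str                                  # node that first published it
    metadata: dict = field(default_factory=dict)


class Node:
    """One node: a holding tank plus pipes to the nodes subscribed to it."""

    def __init__(self, name):
        self.name = name
        self.pipes = []        # downstream nodes that subscribed to this one
        self.trusted = set()   # sources whose content this node shows
        self.tank = {}         # holding tank: object_id -> local copy
        self.visible = set()   # object_ids currently shown to readers

    def publish(self, obj):
        """Publish: store the object locally and push it down every pipe."""
        self._receive(obj)
        for node in self.pipes:
            node._receive(obj)

    def subscribe(self, upstream):
        """Subscribe: open a pipe from an upstream node to this node."""
        upstream.pipes.append(self)

    def moderate(self, source, trusted):
        """Moderate: set local trust for a source; affects future arrivals."""
        if trusted:
            self.trusted.add(source)
        else:
            self.trusted.discard(source)

    def rollback(self, source):
        """Rollback: re-evaluate what is already in the tank from a source."""
        for obj in self.tank.values():
            if obj.source == source:
                self._evaluate(obj)

    def edit_metadata(self, object_id, **changes):
        """Edit Metadata: annotate the local copy only."""
        self.tank[object_id].metadata.update(changes)

    def _receive(self, obj):
        # Keep a private copy so local metadata edits never leak upstream.
        local = replace(obj, metadata=dict(obj.metadata))
        self.tank[local.object_id] = local
        self._evaluate(local)

    def _evaluate(self, obj):
        # Own content and trusted sources are shown; the rest waits in the tank.
        if obj.source == self.name or obj.source in self.trusted:
            self.visible.add(obj.object_id)
        else:
            self.visible.discard(obj.object_id)


news, reader = Node("news"), Node("reader")
reader.subscribe(news)
news.publish(MediaObject("post-1", source="news"))   # held by reader, not shown
reader.moderate("news", trusted=True)
reader.rollback("news")                              # post-1 becomes visible
```

In this sketch nothing is ever removed from the tank, so every moderation decision stays reversible, and each node's trust set is purely local.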

The central R&D question is: can trust-based moderation and distribution flows replace algorithmic amplification in a federated news ecosystem?

Expected outcomes of this first-stage grant:

- By Month 3: A technical specification of the flow architecture; a prototype flow service routing ActivityPub objects between two instances; documentation of existing Fediverse flow patterns; early integration with one platform (likely PeerTube).

- By Month 6: A cross-platform prototype connecting at least two Fediverse systems; a working demonstration of trust-based moderation flows; a public code repository and documentation; and a user-facing prototype via the #makinghistory test environment (https://unite.openworlds.info/Open-Media-Network/MakingHistory).

All outputs will be released under recognised open source licences. The project follows the #4opens framework: open data, open source, open standards, and open process.

Have you been involved with projects or organisations relevant to this project before?

Yes. The project lead, Hamish Campbell, has over 40 years of experience in grassroots media and technology, including early involvement with Indymedia – the pioneering open publishing news network – and more than 8 years working directly with the Fediverse and ActivityPub community. The #OMN conceptual framework has been developed over this time and is documented extensively in the project wiki, SocialHub, and at https://hamishcampbell.com. Developer Michael has contributed to #OMN concepts and logic for 10 years and is currently building the #makinghistory reference implementation. Ben, the core developer of Emissary, brings specific expertise in the codebase that will form the technical foundation of the project. Alex brings potential DAT/distributed storage support, and IKA will work on testing and rollout.

Requested Support

Requested Amount: €45,000

Explain what the requested budget will be used for. Does the project have other funding sources, both past and present? A breakdown in the main tasks with associated effort is appreciated. Make rates explicit.

The budget covers a lean, seed-stage proof of concept with no prior external funding. There are currently no other funding sources. The budget breakdown can be found in the attached PDF (funding).

Roles: Hamish Campbell (project lead, coordination, documentation, community engagement) and Michael Saunders (primary development, UX, system logic). Additional contributors (Ben, Alex, IKA) are contributing on a voluntary/community basis during this seed phase.

Work packages and approximate effort:

- WP1 Research & Specification (Months 1–2, ~25% of effort): Architecture design, gap analysis of existing Fediverse tools and flows (PeerTube, Lemmy, Mastodon), and documentation of trust-flow patterns. Output: Technical design document.

- WP2 Core Development (Months 2–5, ~45% of effort): Flow service implementation on top of Emissary; ActivityPub integration for the #5F model; and a trust-based moderation layer extending Emissary’s existing block/flag capabilities. Output: Working prototype codebase.

- WP3 UX & Prototype (Months 3–5, ~20% of effort): #makinghistory user interface; dual-layer UX (simple and advanced modes); and WCAG 2.1 accessibility compliance. Output: Testable user prototype.

- WP4 Testing & Documentation (Months 5–6, ~10% of effort): Community testing and iteration; public documentation and reports; and an open knowledge base of what works and what fails. Output: Public documentation, reports, and reusable design patterns.

LINK PDF and wiki

Compare your own project with existing or historical efforts.

The closest existing efforts are:

- Mastodon’s built-in moderation tools: per-instance block lists and the Fediblock community blocklist. These are instance-level tools – they do not create cross-platform trust flows or shared content aggregation. #OMN operates at the network layer, not the instance layer.

- Fediseer: a trust registry allowing instances to vouch for each other. Fediseer addresses instance-level reputation but does not implement content flow logic, rollback, or metadata editing as network functions. #OMN builds a compostable flow model on top of the kind of trust signals that Fediseer represents.

- GNU Social / Friendica: older federated social platforms with some aggregation capability. These predate ActivityPub’s consolidation as the dominant standard and do not address the cross-platform news/media commons use case.

- Indymedia (1999–2010s): the historical precedent for open publishing federated media. Within the wider project, #OMN explicitly revives and modernises the Indymedia model for the ActivityPub era via the #indymediaback reference implementation, addressing the unfinished work of that tradition. The #makinghistory project grows from, and shares, this same established workflow.

- Bonfire networks: likely related, but unclear in scope and function. Attempts to install and use it have not clarified its approach. It may be trying to address similar problems, but this remains uncertain.

The key difference of #OMN: it is not building a new platform. It is building a protocol-level flow layer that works across existing Fediverse platforms, implementing trust-based content propagation as commons infrastructure rather than as a product. See included PDFs.

What are significant technical challenges you expect to solve during the project?

- Trust flow implementation: Designing and implementing a data model for trust relationships between federated nodes that is lightweight, compostable, and expressible via or alongside ActivityPub. Trust is local and subjective – the system must allow different communities to apply different trust filters to the same content flow without requiring global consensus.

- Rollback across federated state: Implementing the rollback function (re-evaluating and reshaping historical content visibility) in a distributed system where content has already propagated to multiple nodes. This requires a time-aware, local re-indexing approach rather than a global delete mechanism.

- Cross-platform content normalisation: Aggregating content objects from Mastodon (short-form social), PeerTube (video), and Lemmy (forum) into a common JSON-LD content model with a consistent trust trail, despite these platforms having different ActivityPub implementations and object schemas.

- Search actors as push feeds: Implementing the “content finds you” model – where a defined search query becomes a persistent ActivityPub actor that pushes matching new content to subscribers – requires extending Emissary’s existing subscribable search engine capability.
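The first two challenges above (local, subjective trust and rollback without a global delete) can be sketched together. This is a toy model under stated assumptions: `LocalIndex`, `Item`, the integer timestamps, and the per-source cutoff rule are hypothetical names and choices made for illustration, not the project's data model or anything provided by Emissary or ActivityPub.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    object_id: str
    source: str
    received_at: int   # logical timestamp; a real system might use UTC datetimes


class LocalIndex:
    """One community's view of a shared flow. Nothing here is sent to other nodes."""

    def __init__(self, trusted):
        self.trusted = set(trusted)
        self.items = []        # append-only: rollback never deletes content
        self.cutoffs = {}      # source -> timestamp from which it is hidden

    def receive(self, item):
        self.items.append(item)

    def rollback(self, source, since):
        """Hide everything from `source` received at or after `since`."""
        self.cutoffs[source] = since

    def undo_rollback(self, source):
        """Reverse an earlier rollback; the hidden items reappear."""
        self.cutoffs.pop(source, None)

    def visible(self):
        """Re-index: recompute visibility from trust and rollback cutoffs."""
        shown = []
        for item in self.items:
            if item.source not in self.trusted:
                continue
            cutoff = self.cutoffs.get(item.source)
            if cutoff is not None and item.received_at >= cutoff:
                continue
            shown.append(item.object_id)
        return shown


# The same flow reaches two communities that apply different trust filters.
flow = [Item("a1", "alice", 1), Item("b1", "bob", 2), Item("a2", "alice", 3)]
town, archive = LocalIndex({"alice", "bob"}), LocalIndex({"alice"})
for item in flow:
    town.receive(item)
    archive.receive(item)

town.rollback("alice", since=3)   # town hides alice's later posts only
```

Because visibility is recomputed from the stored items each time, a rollback in one community changes nothing for the other, and undoing it needs no re-fetch from the network.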

Describe the ecosystem of the project, and how you will engage with relevant actors and promote the outcomes.

The primary ecosystem is the Fediverse: the network of federated, open-source social platforms running ActivityPub, including Mastodon, PeerTube, Lemmy, Friendica, and many others. This ecosystem has grown substantially (estimated 10+ million active users across thousands of instances) but remains technically fragmented at the commons/media layer. The project builds directly on the Emissary codebase (https://emissary.dev), an existing ActivityPub-native Go application. Engagement with the Emissary community is embedded in the team through Ben’s mentoring role.

Wider ecosystem engagement:

The project will contribute design patterns and documentation back to the broader Fediverse developer community via public code repositories, the project wiki, and events. The #makinghistory test phase connects us to existing archives such as Bishopsgate, MayDay Rooms, the Peace Museum, and the Campbell Family Archive, providing access to extensive datasets as well as outreach to their administrators and users. The five community events included in the budget are specifically designed to recruit contributors, gather real-world feedback, and expand the network of participating nodes.

Promotion of outcomes:

Outcomes will be shared through the Fediverse itself (maintaining an active presence on ActivityPub-native platforms and legacy social media), via open-licensed documentation, and through NGI/NLnet networks and events. This first-stage grant is explicitly designed as a seed and proof-of-concept phase, with a larger second-stage proposal planned to deliver a fully production-ready system once the core architecture is validated.

See attached PDFs.


I would like to thank the many people who helped with this.