People think in groups. That’s normal. The mistake isn’t group thinking itself, it’s pretending we’re all isolated individuals while still acting through tribes, identities, and social blocs. A lot of today’s “common sense” comes from the #stupidindividualism group mindset. We are encouraged to see every problem through individual choices rather than collective realities. The real question isn’t “how do we stop group thinking?” It’s “what do we do with it?”

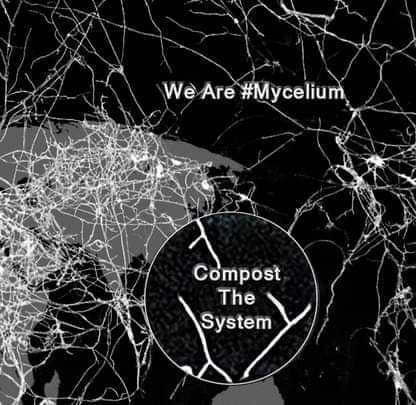

This mess is something we need to compost. In movements, communities, and alternative projects, we need language to describe the different forces shaping what happens. Without shared vocabulary, patterns remain invisible. People experience the same problems repeatedly, but each incident looks like an individual conflict rather than part of a wider social mess making. Within the #OMN hashtag story, we already have some useful terms.

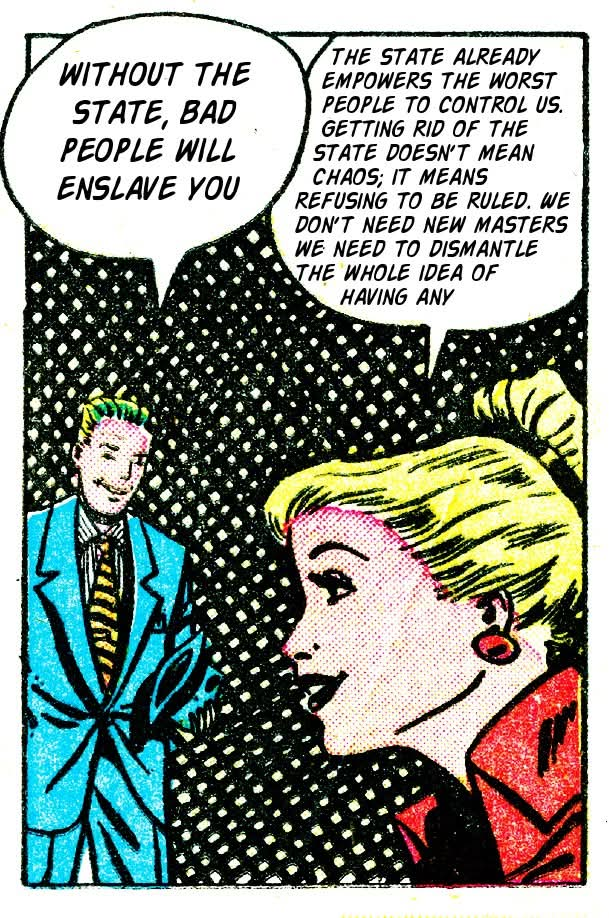

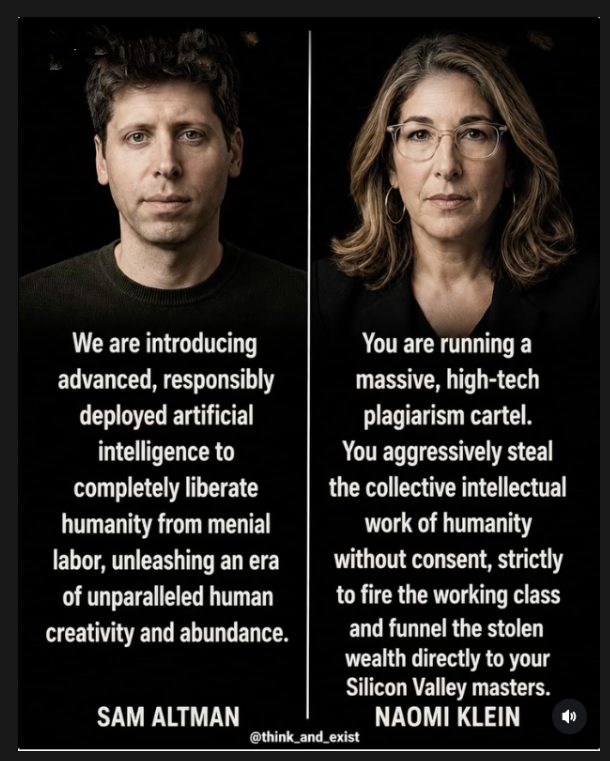

- #nastyfew – power from above. The #nastyfew are the obvious actors: the concentrated power of tech, business, political, and institutional elites. They are the people who shape systems through money, ownership, influence, and formal authority. They are easier to identify because their power is visible. The #nastyfew don’t usually pretend not to have power. Their influence comes from controlling resources, platforms, laws, infrastructure, and narratives. This is the traditional problem of hierarchy.

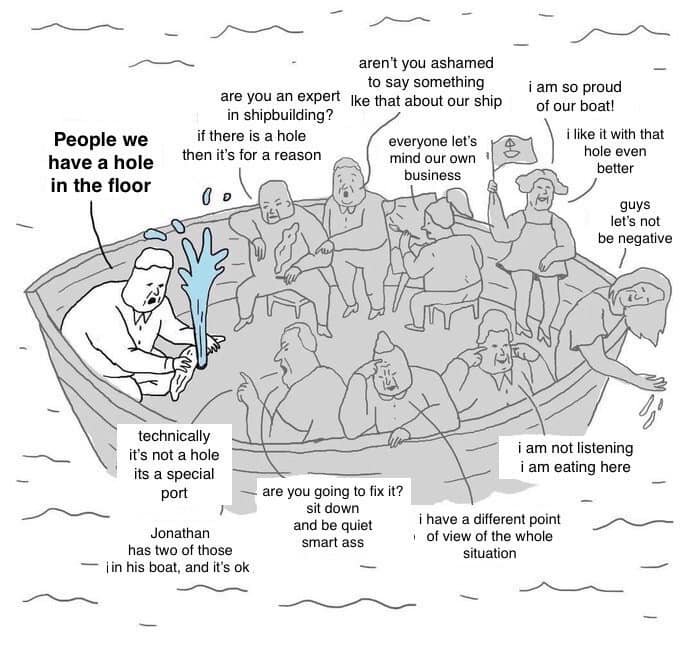

- #fluffy – conflict avoidance, the comfortable side of activism and community organising. These are the people who want harmony, inclusion, and safety – often good things – but always at the cost of avoiding difficult conversations, uncomfortable truths, or necessary conflicts. The fluffy crew are not the enemy. We need this side, as movements without care become brittle, aggressive, and unsustainable. The problem is when #fluffy becomes a substitute for action, and keeping things pleasant becomes more important than addressing what is actually happening.

#fluffy – comfortable, non-threatening, conflict-avoiding activism. Well understood in context. #spiky – confrontational, direct, willing to cause friction. Debate is the thing that is too often missing, and it holds the power.

But there is another pattern we need to compost, one that does not fit either category. It is something more subtle: the missing category is the weaponised nice person. There is a difference between being kind and using kindness as a tool of control. There is a difference between creating a welcoming space and using the language of welcome to #block challenge. This is the person who performs niceness while quietly enforcing conformity.

These people are in every movement and every activist camp. They use politeness rules, social reputation, community trust, emotional pressure, and claims of protecting the group as mechanisms to block criticism, avoid accountability, and preserve existing power. They are not the #nastyfew, as they are not openly dominating from above. Often they appear as the opposite: they look caring and sound reasonable.

They say “We need to be constructive.” “We don’t want conflict.” “That isn’t the right way to say it.” “We need to protect the community.” Sometimes those statements are valid, but often they are used as a shield against anything disruptive, challenging, or genuinely new. This is what we need a #hashtag for.

The gap is specific: the person who performs niceness or fluffiness as a weapon – who uses social respectability, politeness norms, or community goodwill as a way to enforce conformity, block challenge, and protect their own position. Not the #nastyfew (they’re openly powerful) and not simply #fluffy (that’s just timid). This is the vile fluffy – nice on the surface, actively harmful underneath.

Maybe #nicenasty describes the contradiction: nice on the surface, nasty in effect. The problem is not kindness; the problem is when kindness becomes a performance used to maintain control. A #nicenasty dynamic appears in spaces that claim to be open: activist groups, community organisations, open source projects, alternative media spaces, and wider social movements. The language is horizontal, but the behaviour becomes quietly hierarchical. Instead of “you cannot do this because I have power”, it becomes “you cannot do this because you are harming the community.” The result can be the same – blocking change. #nicenasty has rhythm, is easy to remember, and does the job. The inversion is the point.

#velvetblock – describes the mechanism, the process itself: a velvet surface hiding a hard barrier. The door is not slammed and people are not openly excluded. Instead, they are slowly redirected, delayed, discouraged, or socially isolated until the challenge disappears. The damage remains polite, the outcome remains the same. #velvetblock – soft surface, hard obstruction. More descriptive of the mechanism.

#fluffygate – implies gatekeeping behind a fluffy front. A bit clunky.

#pratocracy – the rule of prats. Funny but loses the specific nice/nasty dynamic.

#softpower – already taken in international relations, would cause confusion.

#vilefluff – pairs well with the #nicenasty tag; keep it in the vocabulary for the spiky people.

#nicenasty is maybe the strongest – it’s immediate, has no baggage, and does what a hashtag should do: compress a complex dynamic into something people recognise and use to organise the movement. The question is whether we need one tag or two. #nastyfew for power from above, #nicenasty for obstruction from within the community itself, #fluffy for the timid. A clean three-part vocabulary?

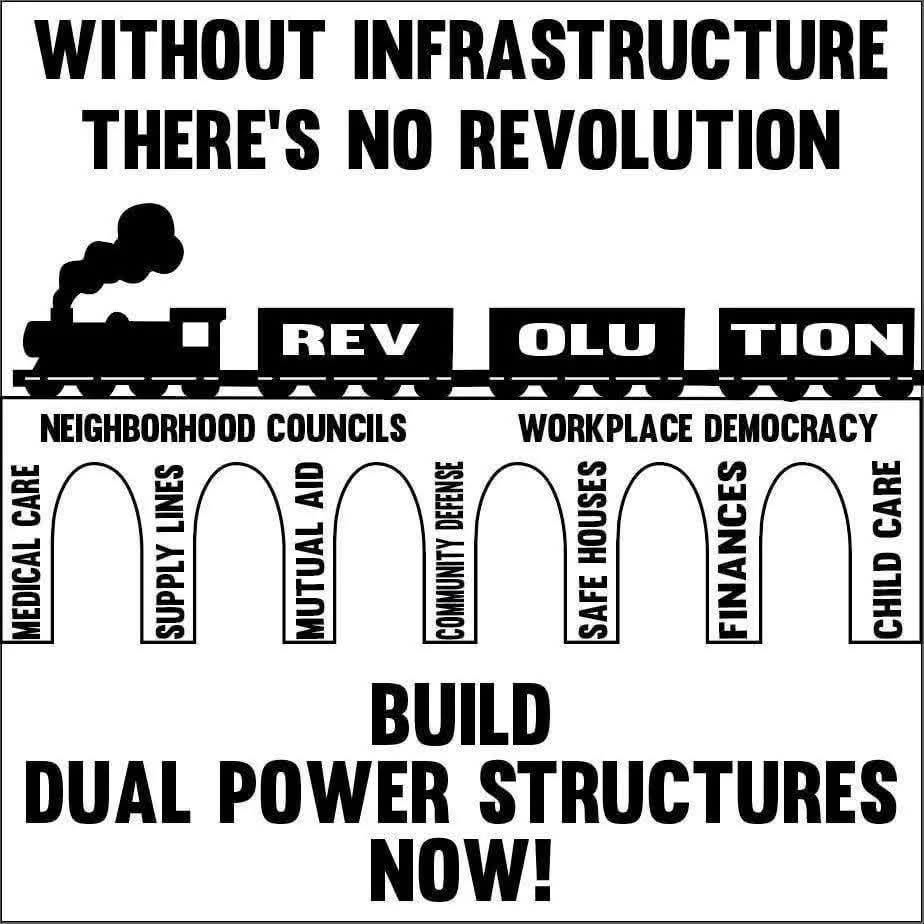

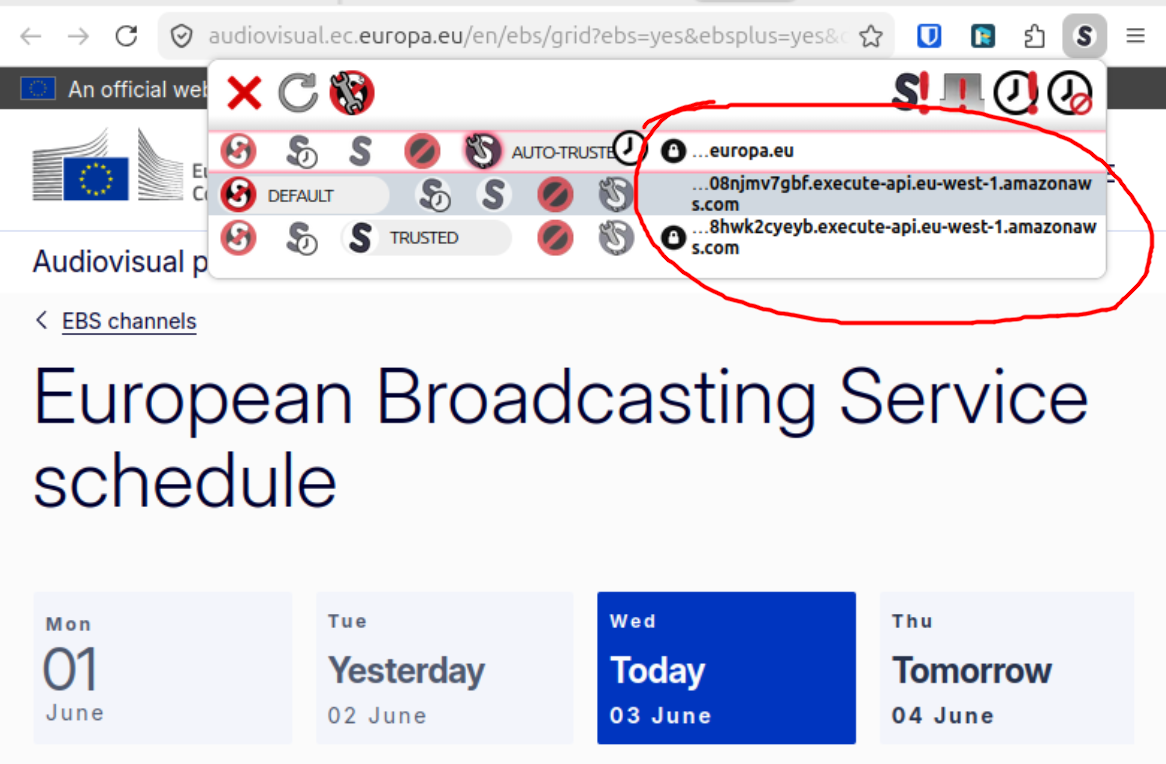

Why this matters for #OMN – The #openweb and grassroots organising depend on the ability to challenge, fork, experiment, and build alternatives. But communities can reproduce the same problems as institutions. They can create informal gatekeepers, protect status, and confuse stability with success. The challenge is not just resisting the #nastyfew; it is also recognising the internal patterns that stop movements growing. A healthy ecosystem needs all three understandings.

#nastyfew – Power concentrated at the top.

#fluffy – Care, connection, and social glue, but with the risk of avoiding necessary conflict.

#nicenasty – Soft power used internally to block challenge while appearing caring.

This gives us a #KISS story path, because not every barrier looks like oppression. Sometimes the strongest walls are built out of good intentions. The answer is not to reject kindness; it is to separate genuine care from control disguised as care. Any native path needs both:

#fluffy to keep people connected.

#spiky to challenge what needs challenging.

And the awareness to recognise when #nicenasty is #blocking.

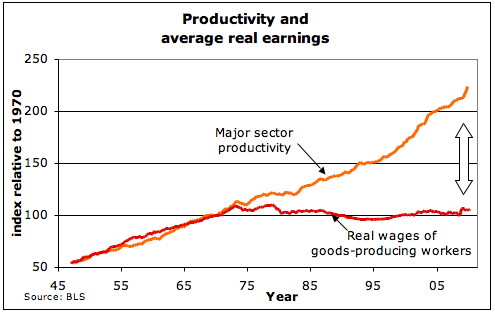

A bit of theory on how this mess comes about – puppets dancing on strings. This is about how consent is manufactured rather than just how force is applied. Ideology isn’t only ideas floating free; it’s rooted in real social and economic structures. Let’s look at some views of this:

Lukács – reification and false consciousness, how capitalism makes its own social relations appear natural and inevitable, like facts of nature rather than human constructions.

Gramsci – hegemony, how ruling class ideas become “common sense,” absorbed so deeply into everyday life that they no longer need to be enforced, because people enforce them on themselves.

Althusser – ideology and ideological state apparatuses, how institutions (schools, media, religion) reproduce the conditions that make capitalism feel like the only possible reality.

So where does the current dead #postmodernism confusion come from? This rotten path also talks about constructed realities, fictions experienced as truth, and the critique of “grand narratives.” So there’s surface overlap. But the difference is that Marxism says ideology can be exposed and overcome through collective understanding and political struggle – there’s a real underneath the false consciousness. Postmodernism says there’s no stable real to appeal to – all truths are partial, constructed, and contested all the way down, so it would be far more sceptical about whether “exposing” ideology gets you anywhere.

Compost or rot – you choose, we need a spade #OMN

What do people think about this, especially in the light of Hannah Arendt’s work?

At what point does neutrality become complicity? Arendt’s writing is useful because she was suspicious of both ideological certainty and political passivity. Her writing on totalitarianism and the “banality of evil” wasn’t about monsters. It was about ordinary people stepping back from judgement and responsibility, retreating into obedience, routine, or disengagement while harmful systems expanded around them.

From this, the danger is not simply taking the wrong side. The danger is refusing to judge at all. At the same time, Arendt valued the public sphere as a space of plurality, where different people could meet, speak, disagree, and act together. Politics, at its best, was not about enforcing a single truth but about creating a shared world despite differences.

This creates a tension for projects like the #OMN, as we often talk about mediation, bridge-building, and creating spaces where people can communicate across divides. But what happens when the issue is no longer a disagreement between equals, but a question of exclusion, inequality, violence, and authoritarian power?

Arendt argues that judgement itself is the responsibility. Not blind partisanship, but the willingness to think, judge, and act in public rather than retreat into private neutrality. Perhaps the distinction is between active mediation and passive fence-sitting.