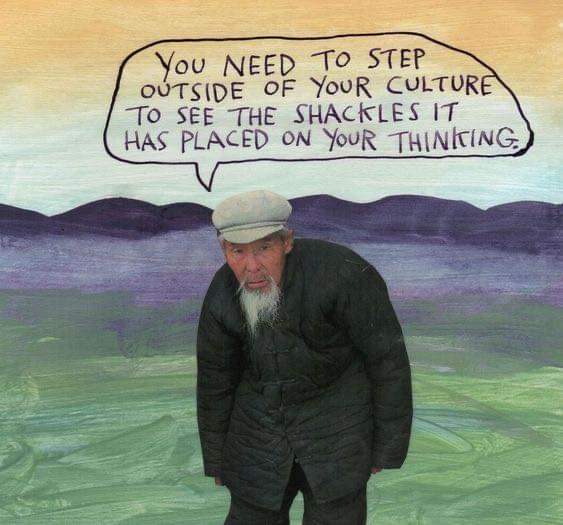

There’s a common confusion, often pushed by well-meaning #fashernistas, about how change actually happens. They love theory. They love to talk about change. But when it comes to doing, things go sideways. Why? Because good horizontalists know: theory must emerge from practice, not the other way around.

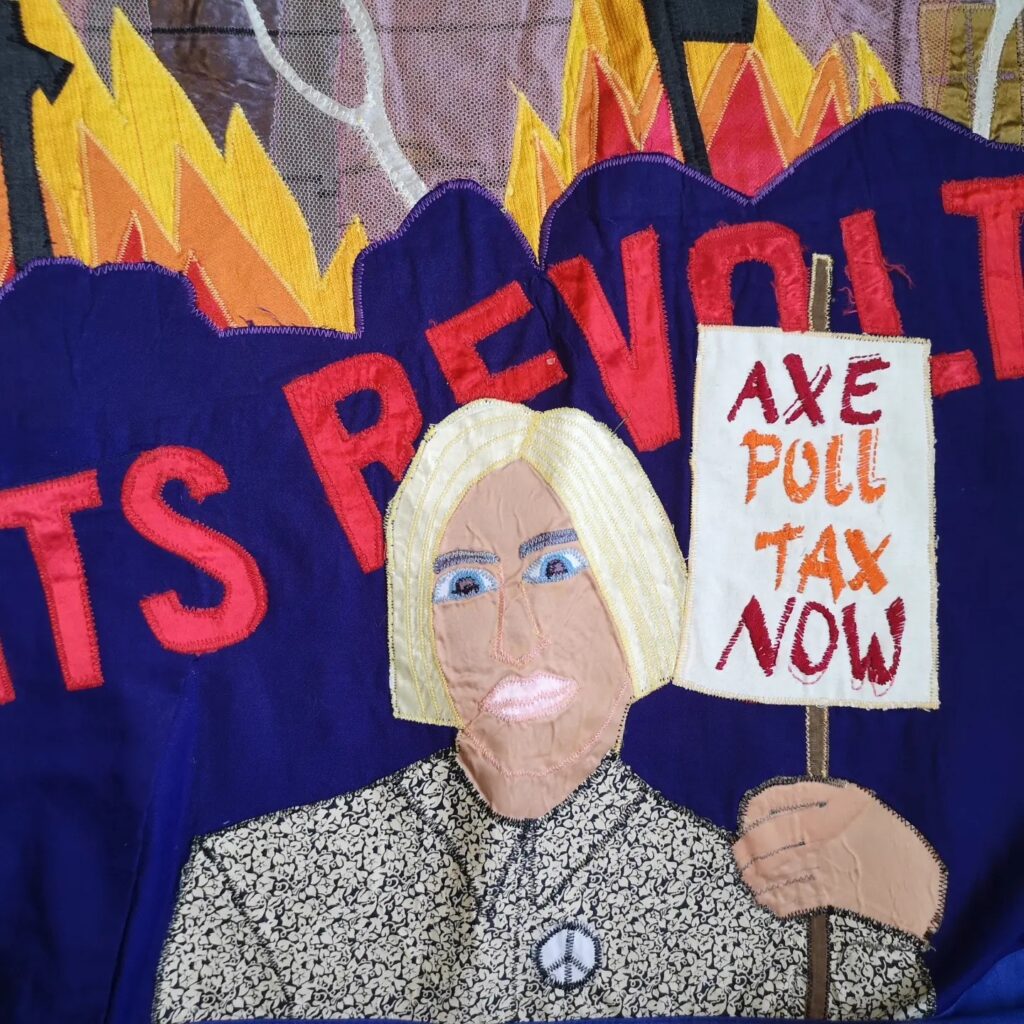

At the root of radical practice is #DIY culture. We don’t wait for perfect theory or academic approval. We get our hands dirty. We try things, we fail, we try again. Through this, we build theory that is grounded in reality, not floating above it.

The problem with top-down theory is that when you start from theory alone, disconnected from lived experience, you go round and round in abstract circles. Then, inevitably, someone tries to apply this neatly wrapped theoretical package as a “solution” to the mess we’re in… and it breaks everything.

At best, this leads to another layer of #techshit to compost. At worst, it becomes academic wank, beautifully phrased but practically useless, imposed on grassroots organisers trying to get real work done.

We’re tired of clearing up after these failed interventions. Focus matters. Resources are scarce. Energy is precious. The practice-first approach is why we’re doing something different with projects like:

#OMN (Open Media Network): building tools from the bottom up, with open metadata flows and radical trust.

#Indymediaback: rebooting a proven model of grassroots publishing that worked, updated for today.

#OGB (Open Governance Body): prototyping governance based on lived collaboration, not abstract debate.

All of this is theory grown from practice. None of it came from think tanks or grant-funded consultants. It came from kitchens, camps, squats, TAZs, mailing lists, and dirty hands.

If you want to be part of this work, great. But please engage with it as it is. Bring your experience, your skills, your curiosity. But don’t dump disconnected theory on it. Don’t smother the flow with top-down frameworks or overthought abstractions.

We need people to join the flow of practice. Let the theory emerge where it’s needed, like compost, growing what feeds us. So: Start where your feet are. Build from what works. Trust the process of doing. And please, don’t push mess our way. We’ve got enough of that already.

Let’s build something real. Together.

#DIY #grassroots #4opens #KISS #deathcult #nothingnew